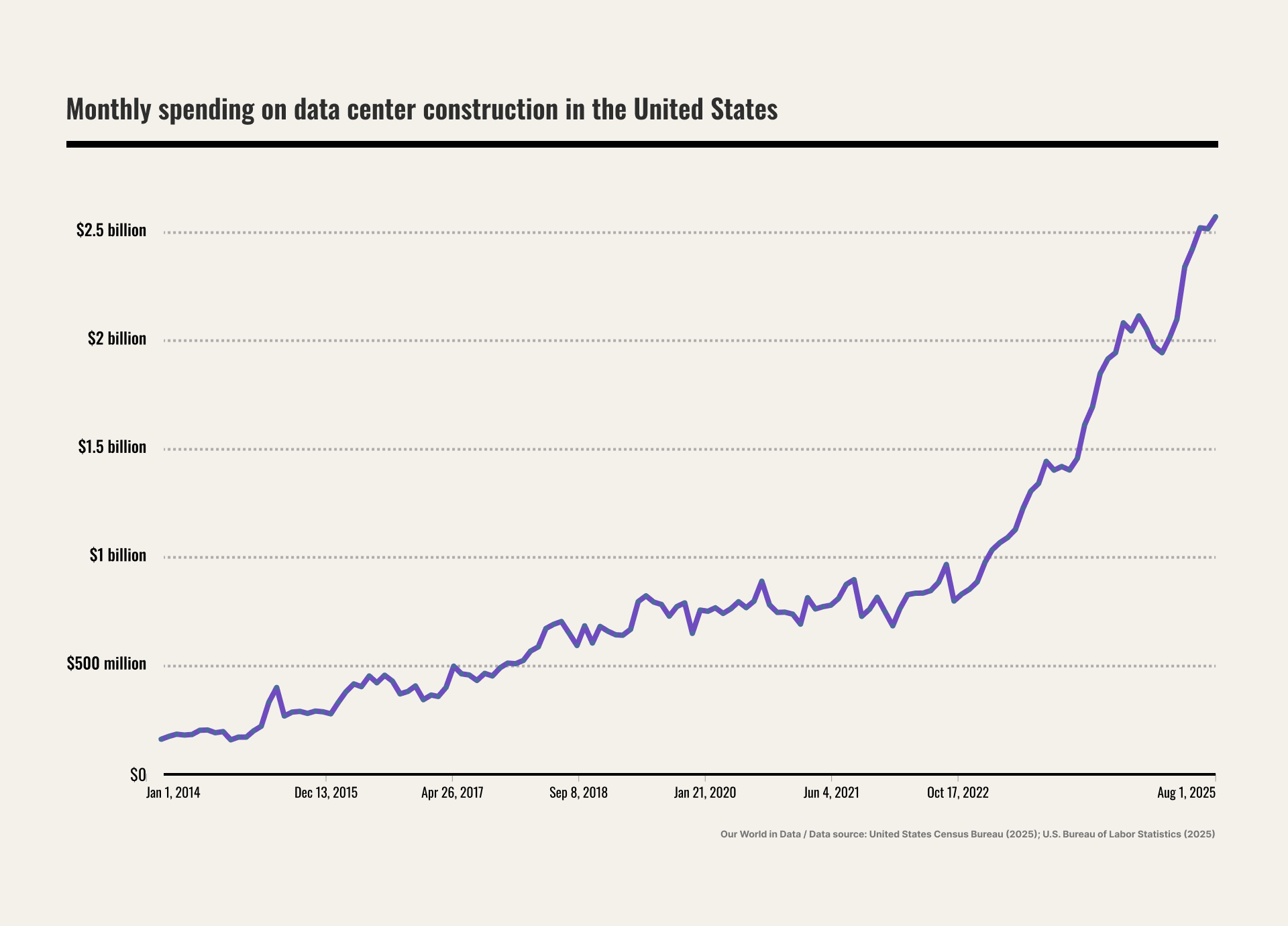

You’ve probably noticed the data center drag race unfolding before our eyes. From smaller regional operators to giants like xAI and Meta, key players vie for the right real estate: enough land for massive facilities, proximity to supply chains and infrastructure, and — perhaps most critically — access to reliable power (a lot of it). The scramble has spilled into local, national, and global politics — fueling community resistance and sharpening debates over sustainability.

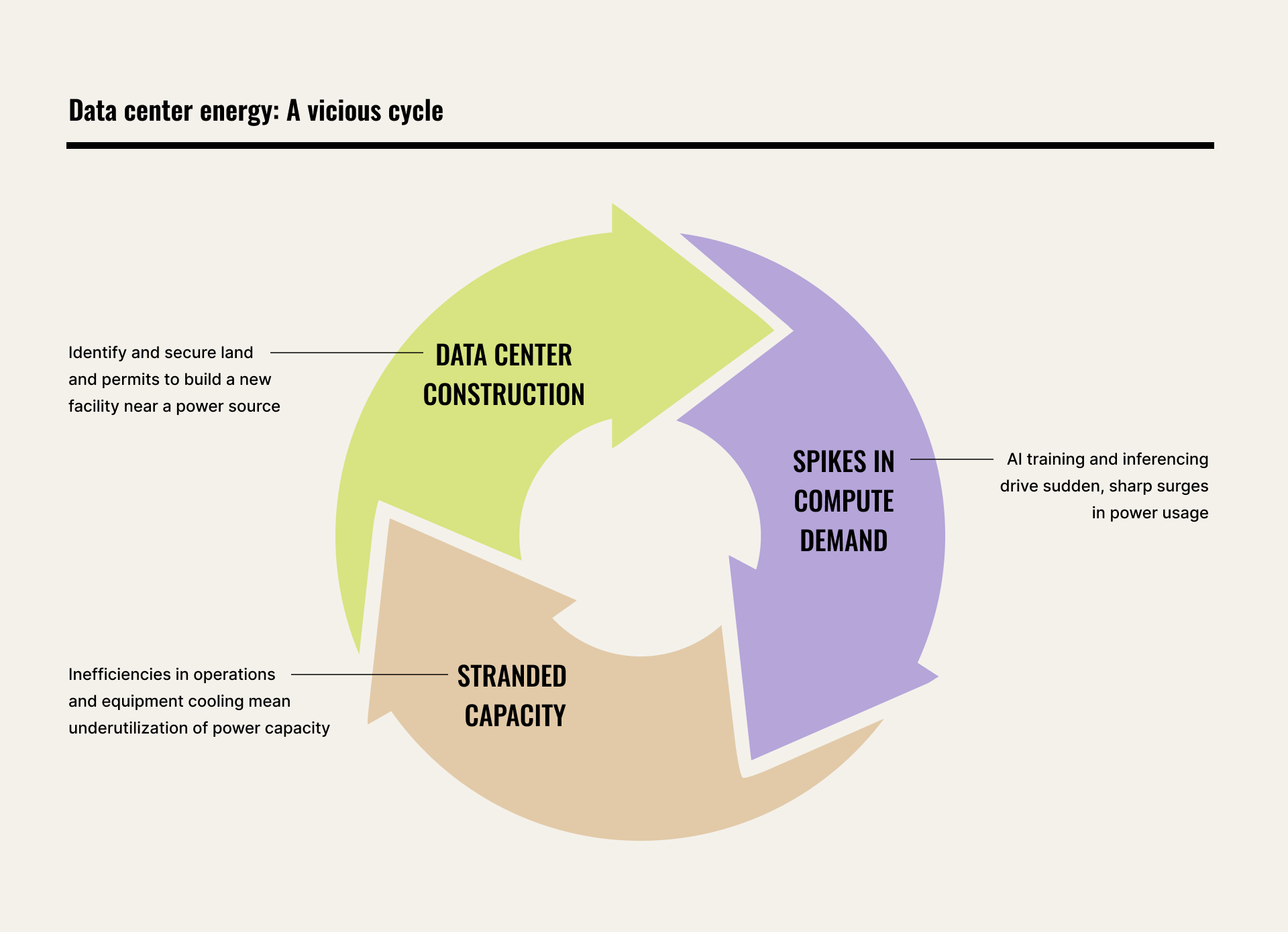

Data centers’ relentless expansion is driven, of course, by the surge in artificial intelligence for three key reasons. First, the chips that power AI require far more electricity than traditional servers. Second, that electricity turns into significant heat, demanding robust cooling systems that consume additional power. And third, AI is proving broadly useful across industries, accelerating adoption and driving sustained demand. As that demand climbs, data centers’ energy consumption is projected to double between now and 2030.

As a product engineer who studies and advises on data center design and operational optimization, I see an uncomfortable irony here: while the industry scours the globe for new power sources, design and operational inefficiencies leave much of the power already wired into existing and newly built facilities unused.

The utilization gap

Data centers look like giant warehouses filled with racks and racks of computer chips; today those are usually powerful graphics processing units, or GPUs. Traditionally, a center is planned around the maximum computing power it is supposed to deliver, at least in theory. In practice, facilities rarely operate at full tilt because capacity is mismanaged. Operators may have overestimated what peak compute demand would look like, for example. Or there may be a deficiency in one or more of the elements that govern power consumption and heat management: the fiber optic network, power cables, cooling system, airflow, equipment layout, and more.

Power draw is not static, and once it becomes heat, the way that heat moves and must be removed is often counterintuitive and invisible to the human eye. Managing all of this is like playing Tetris at high speed without the little preview window that shows which shape is coming next. Each change, whether a new hardware deployment, an equipment decommission, or a new AI inferencing or training workload, is like a new block dropping in awkwardly, altering power and cooling demands in ways that can make the problem worse. If your data center suffers from one or more stubborn hotspots, you’re hamstrung: you can’t deploy new equipment, performance degrades, and servers throttle back their power use to avoid irreversible damage. The result is what we call “stranded capacity”: on average, a staggering 40 to 50 percent of available power left on the table.
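To make “stranded capacity” concrete, here is a minimal Python sketch under deliberately simple assumptions: each zone’s deployable IT load is capped by the lower of its electrical budget and the heat its cooling can reject, and everything else (redundancy, floor space, network) is ignored. The `Zone` class, the helper names, and the three-row hall are hypothetical and illustrative, not measured data from any facility.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Zone:
    """One row or hall of a hypothetical facility (all figures in kW)."""
    name: str
    power_budget_kw: float   # electrical capacity provisioned to the zone
    cooling_limit_kw: float  # heat the zone can reject before hotspots form


def usable_kw(zone: Zone) -> float:
    # Nearly all IT power ends up as heat, so the deployable load is capped
    # by whichever runs out first: the power feed or the cooling.
    return min(zone.power_budget_kw, zone.cooling_limit_kw)


def stranded_fraction(zones: list[Zone]) -> float:
    """Share of provisioned power that cannot be deployed safely."""
    provisioned = sum(z.power_budget_kw for z in zones)
    usable = sum(usable_kw(z) for z in zones)
    return (provisioned - usable) / provisioned


# Hypothetical hall: illustrative numbers only, not from a real site.
hall = [
    Zone("row A", power_budget_kw=1000, cooling_limit_kw=1000),
    Zone("row B", power_budget_kw=1000, cooling_limit_kw=400),  # hotspot
    Zone("row C", power_budget_kw=1000, cooling_limit_kw=300),  # poor airflow
]

print(f"stranded: {stranded_fraction(hall):.0%}")
```

With these made-up numbers, two thermally constrained rows strand about 43 percent of the provisioned power even though the electrical feed is fully available, which is the hotspot pattern described above.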

Scaled globally, the implications are significant. While the data center world scrambles for available power, thousands of facilities worldwide are sitting on megawatts or even gigawatts of stranded power. If a meaningful share of that built capacity can’t be used because power and cooling capacity are mismanaged, the economic and environmental costs are substantial.

The thermal bottleneck

For years, data center rack densities, meaning the power draw per rack, hovered in the five to 10 kilowatt range. Today, a single AI rack commonly draws around 130 kilowatts — enough electricity to power dozens of homes, all packed into a footprint of about eight square feet. In coming years, next-generation systems will push toward 600 kilowatts, or even a megawatt per rack. At those levels, you are no longer cooling a room. You are managing a concentrated heat output like that of a small community.
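The jump in density is easier to feel with a back-of-the-envelope airflow calculation. The sketch below uses the standard sensible-heat balance for air, P = ρ · V̇ · c_p · ΔT, solved for the airflow V̇. The air density, specific heat, 15 K inlet-to-outlet temperature rise, and the function name are illustrative assumptions, not design guidance.

```python
AIR_DENSITY = 1.2     # kg/m^3, air near room temperature (assumed)
AIR_CP = 1005.0       # J/(kg*K), specific heat of air (assumed)
M3S_TO_CFM = 2118.88  # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3s(heat_kw: float, delta_t_k: float) -> float:
    """Airflow needed to carry away heat_kw with a delta_t_k temperature rise.

    Sensible-heat balance: P = rho * V_dot * cp * dT
    so V_dot = P / (rho * cp * dT), with P converted from kW to W.
    """
    return (heat_kw * 1000.0) / (AIR_DENSITY * AIR_CP * delta_t_k)


# Compare a legacy rack with today's and next-generation AI racks.
for rack_kw in (10, 130, 600):
    flow = required_airflow_m3s(rack_kw, delta_t_k=15.0)
    print(f"{rack_kw:>4} kW rack: {flow:6.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

Under those assumptions, a 130 kW rack needs roughly 7.2 cubic meters of air per second, 13 times what a 10 kW rack needs, pushed through the same small footprint. That scaling is one reason high-density racks increasingly rely on liquid cooling rather than room air alone.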

This density changes everything. It means small design or operational imperfections can cause big problems. If airflow is poorly balanced, if liquid coolant isn’t evenly distributed, if chillers are placed too close together, or if heat rejection units recirculate warm exhaust, usable capacity disappears. The electricity may be available at the meter, but the facility cannot safely convert it into additional computing capacity. The bottleneck is thermal, not electrical.

AI introduces another complication: volatility. These systems don’t ramp up gradually. Demand can jump from near idle to maximum output in milliseconds — and drop just as quickly. Those sharp swings strain cooling, power and control systems that were designed for steadier, more predictable patterns of use.
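A rough lumped-capacitance estimate shows why fast swings matter. If a load step arrives faster than the cooling controls can respond, the extra heat goes into the coolant’s thermal mass in the meantime, so the temperature rise is approximately ΔT ≈ ΔP · t_lag / (m · c_p). The sketch assumes hypothetical values (a 120 kW step, a 30-second control lag, about 200 kg of water in the local loop) and ignores heat absorbed by hardware and piping, so treat it as an order-of-magnitude illustration only.

```python
WATER_CP = 4186.0  # J/(kg*K), specific heat of water


def temp_rise_during_lag(step_kw: float, lag_s: float, coolant_kg: float) -> float:
    """Approximate coolant temperature rise before cooling catches up.

    Lumped-capacitance sketch: during the lag, the extra heat step_kw goes
    entirely into the coolant's thermal mass C = m * cp, so dT = P * t / C.
    """
    thermal_mass = coolant_kg * WATER_CP  # J/K
    return step_kw * 1000.0 * lag_s / thermal_mass


# Hypothetical scenario: a rack jumps by 120 kW, cooling takes 30 s to
# respond, and the local loop holds about 200 kg of water.
rise = temp_rise_during_lag(step_kw=120, lag_s=30, coolant_kg=200)
print(f"coolant rise before controls respond: {rise:.1f} K")
```

With those assumed numbers the coolant climbs by roughly 4 K before the controls catch up. Halve the lag or double the thermal buffer and the rise halves, which is exactly the kind of tradeoff worth exploring in simulation before it happens on the floor.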

Simply put, AI has changed the stakes. Cooling is no longer a secondary system — it is the system. Unless we apply the same engineering rigor that other high-stakes industries adopted decades ago, we will keep building new capacity to compensate for the power we already have but cannot safely use.

Model first, build later

The blueprint for modernizing data centers may seem unexpected: aerospace engineering. Decades ago, that industry moved beyond physical trial and error to physics-based simulation, testing design and airflow in software long before an aircraft actually flew. Data centers, by contrast, have often relied on conservative overengineering and operational guesswork — even as AI has driven rack densities and thermal loads to unprecedented levels. That disconnect is becoming harder to justify.

In my role at Cadence, a tech company that uses design and analysis software to help clients futureproof their businesses, I spend my days deep in computational fluid dynamics (CFD), applying the same physics used in aerospace simulations to data centers. CFD allows engineers to see what is otherwise invisible: where hotspots will form, how air and liquid will circulate, and which constraints will strand capacity. This process once took hours, but with modern GPUs, it can now be done in minutes.

Through a digital twin, a live virtual replica of a data center, we can test scenarios that would be reckless to try in the real world: shifting high-density workloads, simulating breaker trips, or modeling cooling failures. In a live facility, a single misstep can trigger outages costing hundreds of thousands or even millions of dollars.

The results are measurable.

For example, at one large financial firm, digital twins of several data halls exposed airflow imbalances that were trapping capacity. Instead of leasing more space or shifting workloads to the cloud, the company reworked cooling and layout — avoiding a new build and saving roughly $200 million in capital costs.

In AI-specific environments, the gains can be even more direct. Another client modeled how workloads were distributed across fixed GPU racks, and the simulation showed that shifting applications — not hardware — could increase output by roughly 20 percent without adding new power. At gigawatt scale, that translates into billions in potential revenue. The physics did not change. The visibility did.

From optional to essential

CFD is no longer a slow tool reserved for PhDs. It’s becoming an embedded, automated layer of day-to-day data center operations: not an engineering luxury, but practical infrastructure. Designing and operating data centers without modeling them first will soon seem as outdated as putting an airplane in the sky and saying a prayer. We used to do what?

The data center race isn’t slowing down. AI demand will keep rising, and new facilities will continue to come online. But expansion doesn’t have to be the only response. Improving how we use existing capacity, even incrementally, is a game-changer. Across hundreds of gigawatts of installed capacity worldwide, even modest gains in utilization could significantly reduce energy waste, avoid unnecessary infrastructure buildouts, and lower associated emissions. We can extract more compute from the same megawatts, rather than endlessly chasing new ones.

Mark Fenton is Product Engineering Group Director at Cadence, a market-leading technology company that uses AI and digital twins to design and optimize high-performance chips and systems across industries for a more efficient, sustainable future. Learn more at cadence.com.