Here in the United States, one of the greatest assets we have in our country — from a scientific standpoint, at least — is our collection of National Laboratories. A total of 17 labs presently exist, which focus on a wide variety of scientific, engineering, and energy-related endeavors. Many of these labs are places where fundamental science thrives, including:

- Fermilab, SLAC, and Brookhaven, where many fundamental and composite particle physics discoveries have taken place and where new experiments offer a window into fundamental reality,

- Los Alamos National Laboratory, where the first atomic bombs were developed and where both nuclear science and explosives developments continue,

- Argonne National Laboratory and the Frederick National Laboratory for Cancer Research, which have used physics and accelerator technologies to further the biological and biomedical sciences,

- Lawrence Berkeley, Lawrence Livermore, and Oak Ridge National Laboratories, which pioneer new avenues for energy generation, including (at LLNL) the National Ignition Facility, which recently surpassed the breakeven point in nuclear fusion research for the first time ever,

as well as many others, located all across the country. All of these labs, in addition to the fundamental and applied sciences conducted there, also heavily rely on the latest in computational technologies to maximize the amount of quality data that can be collected and analyzed for humanity’s benefit.

At the end of November 2025, the Department of Energy launched a new endeavor affecting all 17 of our National Laboratories: the Genesis Mission. It promises to revolutionize science by building an integrated, AI-powered platform for discovery. Its proponents are already hailing it as “the world’s most complex and powerful scientific instrument ever built.” But a closer look at what’s being promised reveals an incredible suite of risks and hazards to our country’s, and our planet’s, scientific future. Is this project a new moonshot, in the spirit of the Manhattan and Apollo projects? Or is it the end of American leadership in the realm of fundamental science? Here’s what everyone should consider.

If you were to chart out the development of human civilization, you’d find that our success as a species, by metrics such as life expectancy, total human population, and overall quality of life, has dramatically improved in recent millennia. Although some scientific and technological advances are ancient even by those standards, such as the harnessing of fire, the development of art and music, and the earliest domestication of animals, it’s really over the past 12,000 years or so that our civilization’s trajectory has skyrocketed. Advances in:

- agriculture, including farming, herding, food production, and labor-saving tools,

- military power, driven by metalworking, transportation advances, and energy sources,

- commerce and industry, driven by writing, money, record-keeping, and mathematics,

- as well as medicine, storytelling, architecture, water and waste transport, and many other fields,

led to the rise of modern civilization.

Throughout the centuries leading up to and following the industrial revolution, continued investments in fundamental science led to new technologies, which in turn led to a better understanding of the problems facing humanity and to better solutions to those problems. The world became richer and freer, and the quality of life experienced by humans on Earth rose hand-in-hand with those advances. The trajectory of human civilization often included setbacks from wars, plagues, famines, and disease, but overall, it continued to advance.

Today, as the end of 2025 approaches, we have an enormous suite of scientific knowledge to draw on, a strong global workforce filled with a wide variety of scientific and technological expertise, and a tremendous amount of computational power at our disposal. Investing in fundamental science provides the foundation for what we can possibly do; technologies build upon that foundation to bring useful tools and conveniences into existence; and then, ideally, societal forces bring those tools and conveniences into our everyday lives in ways that enhance and improve our lived experiences here on Earth. That has always been the path, and it is arguably the only successful path, for improving the lives of current and future humans on our planet.

However, it’s also the case that the new technologies we develop, sometimes directly as part of our scientific investigations, can then be applied to help develop our scientific foundations, taking us to places we could never have gone without them. In recent years, scientific data sets have become so large, and scientific problems so far-ranging and complex, that humans no longer analyze them manually, by hand and/or by eye. Instead, the data is analyzed by computer: initially by algorithms developed and written by humans, but increasingly by algorithms arrived at through machine learning techniques.

As humans, we can often pick out objects, items, or other “data points” of interest simply by defaulting to what our minds naturally do: recognizing patterns. Whenever we look at something, we decide what to pick out based on our experience and our instinct. It makes sense, then, that the more we’ve seen and experienced, the better we’re going to be at recognizing those sought-after patterns when they appear again. In particular, the more relevant, closely related experience we have, the better we’re going to do.

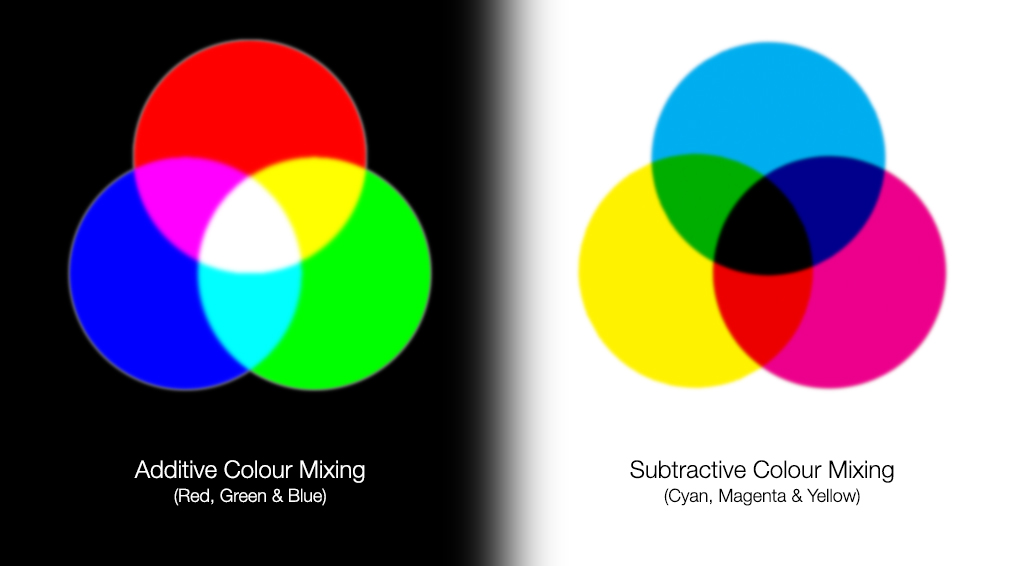

This task was often elusive for computers until the late 2010s, when artificial intelligence and machine learning really came into their own. The core of machine learning is to:

- acquire a large amount of high-quality training data,

- identify patterns that exist within that data (despite not being explicitly programmed to do so),

- and then recognize something that’s a sufficient mathematical match to something it’s already “seen” previously,

- often classifying and successfully picking out items or objects that even an expert-level human might have passed over.
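The train-then-match loop above can be sketched in a few lines. This is a minimal illustration, not anything the labs actually run: it assumes a tiny, made-up set of two-dimensional feature vectors with hypothetical labels (“star” and “galaxy”) and uses one of the simplest possible learners, a k-nearest-neighbor classifier, which labels a new point by majority vote among the closest examples it has already “seen.”

```python
import math
from collections import Counter

# Hypothetical labeled training data: (feature vector, label).
# These numbers are invented purely for illustration.
TRAINING_DATA = [
    ((0.9, 0.1), "star"),
    ((1.0, 0.2), "star"),
    ((0.8, 0.0), "star"),
    ((0.1, 0.9), "galaxy"),
    ((0.2, 1.0), "galaxy"),
    ((0.0, 0.8), "galaxy"),
]

def classify(point, training_data=TRAINING_DATA, k=3):
    """Label `point` by majority vote among its k nearest training examples."""
    # Rank every training example by Euclidean distance to the new point.
    ranked = sorted(training_data, key=lambda item: math.dist(point, item[0]))
    # No pattern is programmed in explicitly: the vote simply reflects
    # which previously seen examples are the closest mathematical match.
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]

print(classify((0.85, 0.15)))  # star
print(classify((0.15, 0.85)))  # galaxy
```

Real pipelines use far larger training sets and more sophisticated models, but the principle is the same: the answer is only as good as how closely the new data resembles the training data.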

That is, in an ideal world, how machine learning and artificial intelligence work. For generative AI, such as large language models, there’s one more step: the machine uses the training data to estimate an “underlying probability distribution” for that data, and then learns to sample from that distribution to generate new data that’s similar to the training data. That “generation” step is the “generative” part of generative AI.
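As a toy sketch of the “estimate a distribution, then sample from it” step, the example below assumes one-dimensional, made-up training data and the simplest possible model: a single normal distribution summarized by its mean and standard deviation. Large language models learn vastly more complex distributions over text using neural networks; only the two-step logic carries over.

```python
import random
import statistics

def fit_gaussian(training_data):
    """Estimate the 'underlying probability distribution' as a normal
    distribution, summarized by its mean and standard deviation."""
    return statistics.fmean(training_data), statistics.pstdev(training_data)

def generate(mean, std, n, seed=None):
    """The 'generative' step: sample n new values from the fitted distribution."""
    rng = random.Random(seed)
    return [rng.gauss(mean, std) for _ in range(n)]

# Hypothetical training data, invented for illustration.
training_data = [4.8, 5.1, 5.0, 4.9, 5.2, 5.0, 4.7, 5.3]
mean, std = fit_gaussian(training_data)
new_samples = generate(mean, std, n=5, seed=42)
print(f"fitted mean={mean:.2f}, std={std:.2f}")
print(new_samples)
```

The new samples resemble the training data without copying it, which is the sense in which such a model “generates” data.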

To be clear, there are many good uses of artificial intelligence and machine learning, just as there are many good uses for computers in general. Most of our 17 National Laboratories are already leveraging advanced computational resources, in areas that include:

- semiconductor developments,

- high-performance computing,

- quantum computers,

- quantum information systems,

- artificial intelligence and machine learning,

and many others. National labs have been a hotbed for the development of supercomputing platforms for several decades now, and there is great hope for our scientific future with the emerging technology of artificial intelligence and machine learning.

If we can successfully integrate these new technologies into the platform for building and enhancing our scientific foundation, it makes sense to think that they can indeed accelerate our scientific progress. According to the new Under Secretary for Science, Darío Gil, in a letter he sent to all national labs on November 24, 2025,

“It’s undeniable that there is a revolution in computing that is going to transform how science and technology is practiced and how research and development (R&D) is done. This revolution is going to impact every office, every mission, and every National Laboratory – in fact it already has. This revolution will be powered by the combination of “High Performance Computing + AI + quantum” and will usher a new class of supercomputing platforms; platforms that we will pioneer and that we will put to use to solve the most challenging scientific problems of this century and unleash a new age of AI- and quantum-accelerated innovation and discovery.”

This is the ideology at the core of this new endeavor: the Genesis Mission.

The big question, and one that goes unanswered in Gil’s letter (or in any statement from the US Government), is all about resource allocation. If this platform is developed responsibly, then what will happen is:

- the computational resources and infrastructure at each national lab will be kept intact,

- but additional resources will be devoted toward networking them together, bringing their data together,

- resulting in the training and assembly of a more powerful, comprehensive AI/ML model,

- that can then be applied to the data sets collected at the labs from their scientific research endeavors,

- which will hopefully result in new insights and potentially even new discoveries.

That’s what the Genesis Mission could look like, under ideal circumstances.

But there are some strong reasons to believe it won’t necessarily look like this. The Genesis Mission, first off, is an ambitious endeavor, and in government-speak, ambitious always equates to expensive. When there are large price tags involved, one always asks, “Where is the money for this going to come from?” Is the Genesis Mission going to be funded on top of the National Laboratory infrastructure that already exists, through a new government investment in American infrastructure? Or are these computational plans going to be funded by diverting money away from the scientific, engineering, and technological endeavors that made these National Laboratories so valuable in the first place?

The modern digital world, as we all know, was predicated on a number of important developments: the recognition that all information could be encoded into a string of 0s and 1s, the development of classical information theory, the invention of the transistor, and the introduction of planar semiconductor manufacturing. These advances brought computers into existence, into our lives, into our homes, and eventually, into nearly everything we do on a daily basis.

According to Gil’s letter, the goal of the Genesis Mission isn’t to simply enhance what we’ve developed by introducing new technologies and improvements to existing technology; it’s to wholly reinvent computing through AI and quantum computation. Gil highlights (bold his) that,

“The Genesis Mission will have as a national goal to accelerate the AI and quantum computing revolution and to double the productivity and impact of American science and engineering within a decade (and in half that time across our National Laboratory complex).”

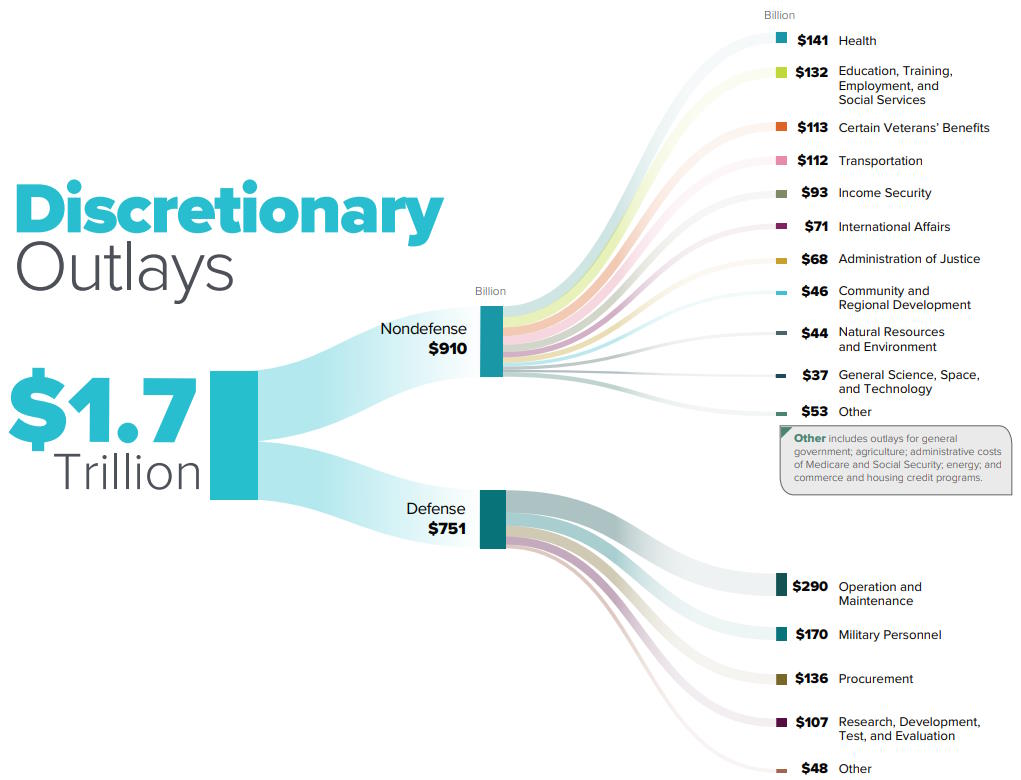

All of which leads to the big reveal: the cost of this project. Gil notes that private-sector-led AI supercomputers have been built with price tags in the tens of billions of dollars. The figure Gil then quotes for the aggregate computing and data center investments planned over the next five years, as the Genesis Mission intends, should exceed $2 trillion in the United States alone. For comparison, the annual budget of the entire Department of Energy was $51 billion, or $0.051 trillion, for the 2025 fiscal year, and the 2026 budget projects a decrease of about 10% from 2025.

That’s asking for an enormous amount of additional resources; even if the entire Department of Energy budget were redirected toward the Genesis Mission, it would provide only about 12% of the necessary budget over the next 5 years: ~$250 billion out of a needed $2 trillion. Basic math alone makes it clear that additional resources will be needed, over and above what the Department of Energy can provide. Gil alludes to this in his letter, noting that the private sector, universities, and philanthropic research institutions, not the federal government, provide the majority of total research and development funding.
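The budget comparison is simple arithmetic, and it can be checked directly. The sketch below uses only the figures quoted above (in billions of dollars); the second scenario, in which every year runs about 10% below fiscal year 2025, is an assumption that extends the 2026 projection across all five years.

```python
# Figures quoted in the text, in billions of US dollars.
DOE_BUDGET_FY2025 = 51.0
GENESIS_FIVE_YEAR_NEED = 2000.0  # "should exceed $2 trillion"
YEARS = 5

# Optimistic case: the full FY2025 DOE budget, held flat for five years.
flat_total = DOE_BUDGET_FY2025 * YEARS
# Assumed case: every year runs ~10% below FY2025, like the 2026 projection.
reduced_total = DOE_BUDGET_FY2025 * 0.9 * YEARS

for label, total in [("flat", flat_total), ("10% lower", reduced_total)]:
    share = total / GENESIS_FIVE_YEAR_NEED
    print(f"{label}: ${total:.1f}B over {YEARS} years = {share:.1%} of $2T")
```

Both scenarios land at roughly 11 to 13 percent of the $2 trillion figure, consistent with the estimate above.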

Think about this for a moment. On the one hand, the Genesis Mission’s stated main goal is building a platform to accelerate scientific discovery. But then its leaders cite a price tag that’s more than an order of magnitude greater than the cost of all the science funded by the government, while insisting that the private sector will need to be engaged. And while there certainly is good work being done on both the artificial intelligence/machine learning and quantum computing fronts, these two nascent industries are also rife with hype, with industry leaders making claims that are wholly at odds with reality.

In the real world, we have a name for that: grift.

There are plenty of great things that artificial intelligence can do for science. It can:

- find additional exoplanets and even exomoons in transit and large survey data,

- find galaxies, black holes, tidal disruption candidates, variable stars, and other astronomical oddballs,

- enhance the success rates of biometric and fingerprint/facial recognition software,

- identify candidates for anomalous events or unusual configurations in collider physics tracks, particle detection experiments, chemical synthesis, or protein folding,

and much more. However, artificial intelligence, particularly generative AI, can also “hallucinate,” introducing unnecessary and often detrimental errors into results that would have been error-free had it not been used. In cases where we lack the necessary, relevant training data, artificial intelligence methods will still give you a confident answer to your query, but that answer may have little to nothing to do with reality.
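The “confident but wrong” failure mode can be illustrated with a deliberately simple, hypothetical model. This is not how generative models hallucinate internally; it only shows the narrower point that a model queried far outside its training data still returns an answer, often with high confidence. The sketch assumes two made-up clusters of training points and a nearest-centroid classifier whose confidence is a softmax over negative squared distances.

```python
import math

def centroid(points):
    """Average position of a list of 2D points."""
    xs, ys = zip(*points)
    return (sum(xs) / len(xs), sum(ys) / len(ys))

# Hypothetical training data: two classes clustered near the origin.
TRAINING = {
    "class A": [(1.0, 0.0), (1.2, 0.1), (0.9, -0.1)],
    "class B": [(-1.0, 0.0), (-1.1, 0.1), (-0.9, -0.1)],
}
CENTROIDS = {label: centroid(pts) for label, pts in TRAINING.items()}

def predict(point):
    """Return (label, confidence) using a softmax over negative squared
    distances to each class centroid."""
    scores = {label: -math.dist(point, c) ** 2 for label, c in CENTROIDS.items()}
    top = max(scores.values())
    # Subtract the top score before exponentiating for numerical stability.
    weights = {label: math.exp(s - top) for label, s in scores.items()}
    total = sum(weights.values())
    label = max(weights, key=weights.get)
    return label, weights[label] / total

print(predict((1.0, 0.0)))     # a point just like the training data
print(predict((500.0, 40.0)))  # far outside anything seen in training
```

The far-away point is still assigned to “class A,” and with higher confidence than the point sitting right on the training data, because this kind of confidence grows with distance from the decision boundary, regardless of whether anything like the query was ever seen.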

Similarly, there are indeed excellent use cases for quantum computation: wherever quantum advantage can be achieved. Unfortunately, quantum advantage requires a problem that:

- can be solved by today’s quantum computers,

- far more efficiently than traditional classical computers can solve it,

- where the problem itself is also relevant and useful, rather than just a demonstration of potential,

- because it takes advantage of an inherently quantum process, such as entanglement, indeterminism, or time crystals, for example.

Quantum computation is certainly fascinating, but there are no proposed scenarios where quantum advantage is expected to be achieved for a useful problem without several revolutions: in the number of superconducting qubits operating simultaneously, in coherence timescales, in quantum error correction, and on many other fronts.

If the dream is that we’ll make this investment and accelerate scientific discovery, then the nightmare is that we’ll use the Genesis Mission as an excuse to further shift our national priorities from things like:

- foundational and fundamental science,

- broad education for children and adults,

- and infrastructure that exists for the common good,

and instead use it to enrich those at the helm of the AI and quantum computing industries. Here in 2025, this has already happened, with devastating effects, to science at NASA, to science at the National Science Foundation, and to the entirety of the Department of Education, among many others.

The government has already dissolved HEPAP, the High Energy Physics Advisory Panel, which had guided science at the National Laboratories since 1967; it did so less than 24 hours before the October 1, 2025 government shutdown began. That fact leads many in the field to suspect that this is yet another nightmare for science come to life. As one scientist, speaking on condition of anonymity, said to me, “We may be Wile E. Coyote, six feet off the cliff edge, but we are still running!”

If we maintain funding for the foundational, civilization-enhancing research that is the hallmark of fundamental science, we can use whatever technological developments ensue to enhance and accelerate new discoveries: exactly the stated intent of the Genesis Mission. However, 2025 has been a year in which federal research has been gutted from start to finish, and many scientists are already planning on jumping ship for other countries that haven’t sought to replace bona fide scientific inquiry with promises that are simply incongruent with reality.

While some are hoping that the Genesis Mission indeed turns out to be the “moonshot” of 21st-century science, a great many scientists, both in the USA and abroad, see only the madness of an administration intent on stripping the public good out of every avenue of government and replacing it with grift and sycophancy. Just as the NSF’s “strategic alignment of resources in a constrained fiscal environment” from earlier this year was code for gutting the country’s flagship science facilities, people are right to worry that the scientific legacy of our National Laboratories is next, to be sacrificed on the altar of political gamesmanship. The words of Darío Gil, the Under Secretary for Science and Genesis Mission Director, speak for themselves:

“The one thing we don’t have is time. We are going to act with an urgency that will feel deeply uncomfortable. The urgency is driven by the rate and pace of the computing revolution and by… our most formidable competitor and adversary, China. This is a race we must and will win. In the original Manhattan Project, the existential threat of the war provided the winning combination of context and urgency… Scientific and engineering advancements in technologies like AI, quantum, fusion, and biotechnology will define the future of our country and of the entire world. We must remember that science, engineering and technology have become the new currency of strategic power. Let’s quiet all the external noise and other distractions, and let’s act like our lives depend on our execution (because they do).”