This article is part of Big Think’s monthly issue The Energy Transition.

Planning commission meetings in Joliet, Illinois, aren’t typically raucous affairs. The one on March 5, however, was buzzing and standing-room-only.

Hundreds of residents crammed into City Hall, filling multiple overflow rooms. Most were waiting for a chance to voice their opinion on a proposal to site a $20 billion AI data center — the largest in the state — on 795 acres of farmland on Joliet’s east side.

“These are mega-rich people who are not here to do charitable things,” said lifelong resident Isabel Gloria. “They don’t love Joliet. I’m here because I love Joliet, and I don’t want to see my utilities go up.”

While many who stepped up to the microphone spoke in favor of the proposed data center, touting the economic and tax benefits it would provide, proponents were clearly in the minority. Expressing concerns about rising electricity rates, water shortages, and uncaring tech oligarchs, most attendees were resolutely opposed.

The commission advanced the plan regardless.

What happened in Joliet is playing out across the country, in municipalities both big and small, as tech companies race to erect gargantuan complexes filled with thousands of graphics processing units (GPUs) to provide the compute demanded by artificial intelligence.

The swift ascent of large language models — AI systems capable of performing a growing range of white-collar tasks — has caught many off guard. For tens of thousands of working Americans, planning commission meetings may seem like the only setting where they can meaningfully slow what appears to be an ominous wave. They can register their displeasure with the faraway technologists who not only seek to replace them with AI agents and robots, but also siphon drinking water and raise electricity rates at the same time.

Nebulous worries over AI crystallize into a clear goal: stop data centers from being built. But while AI data centers may or may not play a role in future job losses, they don’t have to increase electricity prices. In fact, if constructed with wise policies in place and smart technologies in use, they can actually drive the costs of electricity down.

The evolution of data centers

Data centers are facilities that house IT infrastructure — everything that happens on the internet or “in the cloud” relies on equipment at data centers. Google’s first data center — established in the late 1990s — consisted of 30 servers stacked in a 28-square-foot cage situated in a shared warehouse. eBay’s servers were in the cage next door. Google’s monthly hosting fees came to $8,850.

Those nascent data centers were positively puny compared to today’s behemoths.

A modern data center can cover hundreds of thousands of square feet and house tens or even hundreds of thousands of GPUs. These centers can cost tens of billions of dollars to build and consume hundreds of megawatts of power.

The U.S.’s data centers are already collectively consuming 5% of the nation’s electricity, and new ones are popping up everywhere — in 2025, Axios estimated that nearly 3,000 were under construction or planned across the U.S. This processing power rush is expected to continue for the foreseeable future, potentially driving data centers’ proportion of U.S. electricity demand to 17% by 2030.

It is this unprecedented electricity requirement that attracts the most ire from locals when new AI data centers are proposed.

Each one can require as much power as a small city, and Americans blame their higher electricity bills on this skyrocketing demand — between 2020 and February 2026, residential electricity prices increased by more than 36% nationwide, according to the U.S. Energy Information Administration.

Politicians have jumped on the blame bandwagon as well. “Data centers’ energy usage has caused residential electricity bills to skyrocket,” Senate Democrats wrote in a letter sent to utilities, accusing them of passing the data center-driven costs of pricey grid upgrades on to their customers. In March, influential Sen. Bernie Sanders even called for a moratorium on data center construction.

But what seems like an obvious case of demand-driven price increases may not be. In fact, the opposite might actually be closer to the truth.

Inside an AI data center

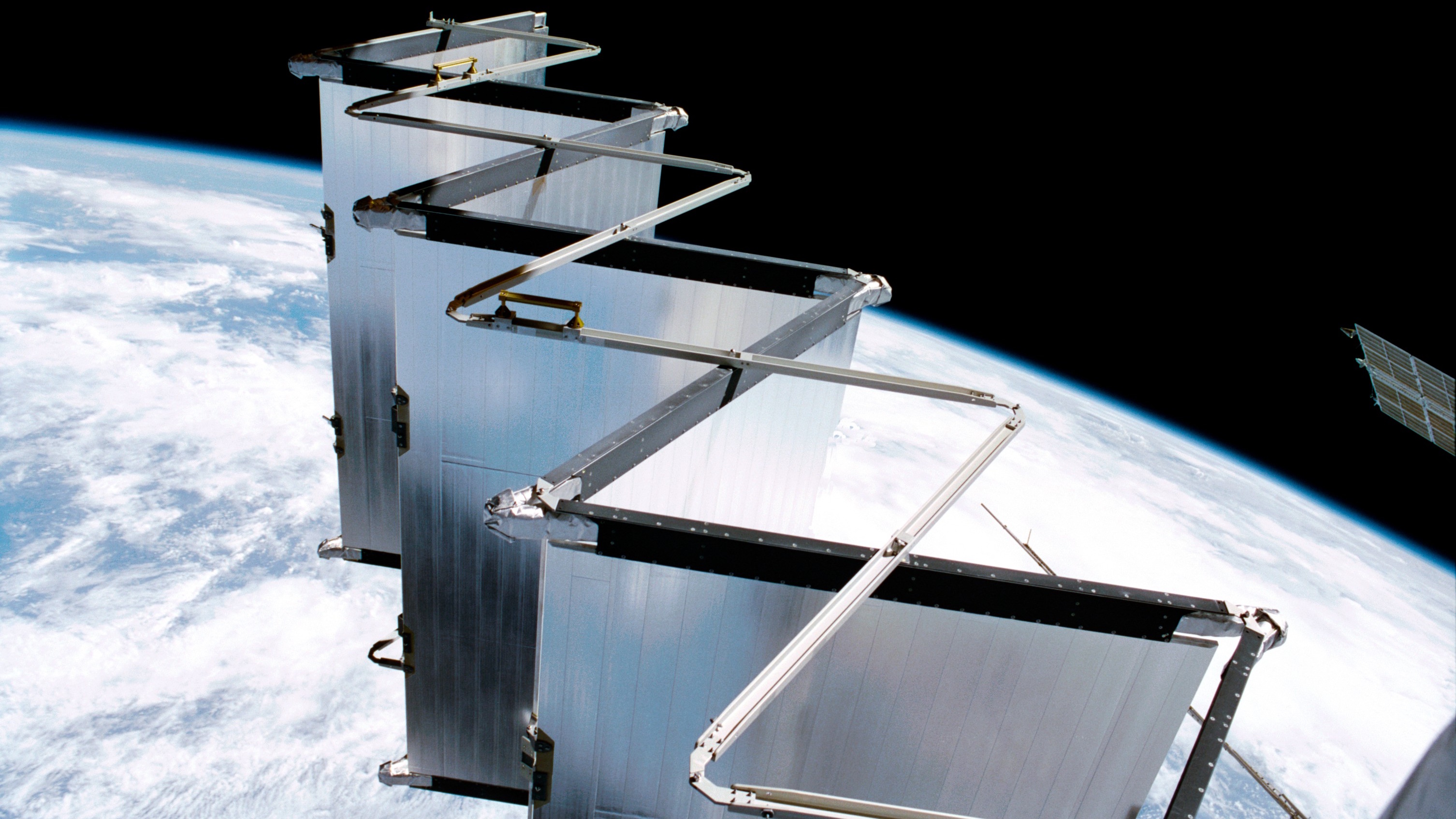

The electricity used by most data centers is generated at the same power plants that supply homes. From there, electrons flow through high-voltage transmission wires, into substations, and then to the data center itself. Electricity inside an AI data center follows a fascinating path.

First, it is received by service entrance switchgear. It then moves on to transformers that step it down to low voltage. Tied in at this point are fossil fuel-based generators, which provide temporary backup electricity during utility outages. Next in the chain are uninterruptible power supply (UPS) modules, battery systems that provide instantaneous electricity for the several seconds it takes the generators to start up. From the UPS systems, electricity then flows to distribution switchgear.

Power distribution units then provide power to groups of server racks. Remote power panels are next in line, distributing power to smaller sets of racks. Last in the power chain are rack power distribution units, which pass electricity along to the GPU-containing servers themselves.

Where electricity delivery ends — at the power-hungry servers — another energy-demanding task begins: heat management.

At the GPUs, electrical energy is converted to thermal energy, which must be removed to prevent damage to valuable components. Traditionally, data centers cooled servers by circulating chilled air. But in the era of AI data centers, this solution is increasingly unworkable. Racks that once consumed perhaps 15 kilowatts of power now eat up five or even 10 times that amount, generating more heat in the process. To prevent damage from these high temperatures, data center operators increasingly pipe fluid directly around computer chips. They’re even considering immersing them entirely in a heat-conducting fluid.
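For a rough sense of scale, the sketch below estimates how much liquid coolant a rack would need. It assumes that essentially all of the electrical power a rack draws ends up as heat, that the coolant is plain water (specific heat of about 4,186 J per kilogram per kelvin), and that the water warms by 10 K on its way through. The 10 K figure and the function name are illustrative assumptions, not values from any operator.

```python
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K), standard value for liquid water


def coolant_flow_kg_per_s(rack_power_kw: float, delta_t_k: float = 10.0) -> float:
    """Mass flow of water needed to carry away rack_power_kw of heat.

    Uses the heat balance Q = m_dot * c_p * delta_T, solved for m_dot.
    delta_t_k is an assumed coolant temperature rise, not a measured one.
    """
    heat_watts = rack_power_kw * 1000
    return heat_watts / (WATER_SPECIFIC_HEAT * delta_t_k)


LEGACY_RACK_KW = 15  # the "perhaps 15 kilowatts" rack from the text

for multiple in (1, 5, 10):
    power_kw = LEGACY_RACK_KW * multiple
    flow = coolant_flow_kg_per_s(power_kw)
    print(f"{power_kw:>4} kW rack -> about {flow:.2f} kg of water per second")
```

Under these assumptions the required flow scales linearly with rack power, so a rack drawing 10 times the power needs 10 times the coolant.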

Rack power could explode even further, making more heat for operators to combat. “We’re thinking about megawatt racks,” Kannan Soundarapandian, vice president and general manager of high voltage products at Texas Instruments, said in March 2025. “The insanity of having a megawatt of power being consumed in the space of something that looks like a refrigerator you cannot overstate.”

A mammoth misconception

If data centers are resource-sucking, burning-hot leviathans, then Northern Virginia should be a hellscape. The region is the data center capital of the world, enabling an estimated 70% of global internet traffic — and capable of drawing close to 5,000 megawatts of power. More broadly, data centers account for 26% of electricity demand in the state. Surely then, electricity costs for the average Virginia resident must be sky-high.

They are not. Publicly available data reveals that Virginia’s average residential electricity rate is 9% below the national average (16.43¢/kWh versus 18.05¢/kWh). Commercial rates, meanwhile, are 31% below the national average (9.73¢/kWh versus 14.12¢/kWh).
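The percentages follow directly from the quoted rates. A minimal check, using only the figures above (the helper name is ours):

```python
def percent_below(rate: float, benchmark: float) -> float:
    """How far `rate` sits below `benchmark`, as a percentage of the benchmark."""
    return (benchmark - rate) / benchmark * 100


# Rates in cents per kWh, as quoted in the text.
residential = percent_below(16.43, 18.05)
commercial = percent_below(9.73, 14.12)

print(f"Residential: {residential:.1f}% below the national average")
print(f"Commercial:  {commercial:.1f}% below the national average")
```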

Though counterintuitive, data centers have historically reduced electricity costs for Americans.

“Most people think that when demand goes up, prices will automatically go up. That is the case with most markets, but electricity is different,” Ian Magruder, executive director of Utilize, an industry-led group focused on increasing power grid efficiency, tells Big Think. “Because of all the fixed costs, all of us who pay into the grid have already paid for a lot of that infrastructure. If we can push more power through the grid we have, we will put significant downward pressure on rates.”
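Magruder's fixed-cost argument can be sketched with a toy model in which the average rate is the grid's fixed costs spread over every kilowatt-hour sold, plus a per-kWh variable cost. All of the dollar and energy figures below are hypothetical, and the model assumes the extra load can be served without building new infrastructure, which is the condition his argument depends on.

```python
def average_rate_cents(fixed_cost_usd: float,
                       variable_cents_per_kwh: float,
                       energy_kwh: float) -> float:
    """Average rate in cents/kWh: fixed costs spread over all sales, plus variable cost."""
    return fixed_cost_usd * 100 / energy_kwh + variable_cents_per_kwh


# Hypothetical utility, for illustration only.
FIXED_COST_USD = 1_000_000_000     # annual cost of wires and plants already built
VARIABLE_CENTS = 4.0               # fuel and operating cost per kWh
BASE_ENERGY_KWH = 10_000_000_000   # annual sales before any new load

before = average_rate_cents(FIXED_COST_USD, VARIABLE_CENTS, BASE_ENERGY_KWH)
after = average_rate_cents(FIXED_COST_USD, VARIABLE_CENTS, BASE_ENERGY_KWH * 1.10)

print(f"Before new load: {before:.2f} cents/kWh")
print(f"After 10% more sales on the same grid: {after:.2f} cents/kWh")
```

In this toy case the fixed-cost share of each kilowatt-hour shrinks as sales grow. If the new load instead required large new investments, the fixed-cost term would grow too and the result could reverse.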

For power to be available any time you microwave leftovers, charge an EV, or flip a light switch, the grid has to produce and carry just as much electricity as what customers collectively request — differences between supply and demand can cause problems, such as outages. But demand can be inconsistent, and many power plants, particularly coal and nuclear, can’t simply start or stop generating power at a moment’s notice. Moreover, wind and solar power are not always available when needed.

To ensure they can meet peak demand, utilities typically build far more generating capacity than they need at most hours of the year. As a result, America’s utilities operate at an average load factor (the ratio of average demand to peak demand) of just 53%, according to a recent analysis from Duke University.

“It’s like building an airplane that only flies with full passengers a few times a year,” says Magruder. “If you can bring more load onto the system, without having to significantly increase infrastructure, then rates will decrease.”

That’s where data centers can help. They ask for consistent, large amounts of power around the clock. Demand spikes become relatively smaller compared to demand overall, which allows the grid to operate more efficiently, lowering costs for everyone. A study recently published by The Brattle Group forecasts that a 10% increase in grid utilization will save Americans more than $100 billion on their utility bills over the next decade. PG&E has stated that for every 1,000 megawatts of new electric demand from data centers, its other electric customers may save between 1% and 2% on their monthly bill in the long term.
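To see why a steady load makes spikes relatively smaller, consider a small sketch of load factor, taken here as average demand divided by peak demand. The 24-hour demand profile and the 200-megawatt data center are invented for illustration and are not drawn from any real utility.

```python
def load_factor(hourly_demand_mw: list[float]) -> float:
    """Average demand divided by peak demand over the period."""
    average = sum(hourly_demand_mw) / len(hourly_demand_mw)
    return average / max(hourly_demand_mw)


# Hypothetical daily demand profile in MW: quiet night, busy evening peak.
base_profile = [500] * 6 + [700] * 6 + [800] * 4 + [1000] * 4 + [600] * 4

# Hypothetical data center drawing a flat 200 MW around the clock.
DATA_CENTER_MW = 200
with_data_center = [mw + DATA_CENTER_MW for mw in base_profile]

print(f"Load factor without data center: {load_factor(base_profile):.2f}")
print(f"Load factor with data center:    {load_factor(with_data_center):.2f}")
```

The flat load raises both the average and the peak by the same amount, so the ratio between them climbs. In this sketch the peak itself also rises by 200 MW, which is only free if the grid has that headroom.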

This benefit isn’t merely hypothetical. In February, Charles River Associates published an analysis showing that data center buildouts did not trigger increases in retail utility rates over the past decade. Researchers from Lawrence Berkeley National Laboratory and The Brattle Group reported last October that over the past five years, states with the greatest load growth saw retail electricity prices decline in real terms. However, they also urged caution: “Higher growth can increase prices if supply and delivery infrastructure is constrained and costly — as is currently the case in many states.”

So, will the present data center buildout be different? Rather than keeping electricity costs in check, as data centers have done in the past, will they instead send them soaring?

Good grid citizens

In February, Greg Upton, executive director at Louisiana State University’s Center for Energy Studies, told NPR’s The Indicator from Planet Money podcast that one of three scenarios could play out. In the first, demand growth exceeds the capacity added to the grid, increasing costs. In the second, supply balloons while demand languishes, which could happen if, for example, the AI bubble pops. This would also increase costs. But in the third “Goldilocks” scenario, data centers’ consistent demand and newer, cheaper forms of power come online in lockstep. The grid thus grows more efficient, making rates decrease for all.

Utilize is working to make this happen. Backed by various entities, including Google, Tesla, and Carrier, the coalition aims to get legislation passed at the state level to mandate that utilities measure grid utilization and incorporate it into their planning processes. Virginia recently became the first state to do so.

“We think that’s a really exciting milestone and could be a model for other states,” says Magruder, adding that bills like Virginia’s incentivize various methods of boosting grid efficiency. “Measuring, managing, and incentivizing utilization can help create the demand for all these solutions.”

Data centers can ease strain on the grid — not just by using less power, but by using it smarter.

The lowest-hanging fruit among these solutions is increasing energy efficiency inside data centers themselves. Advanced power electronics, direct-current architectures, and novel cooling methods (which can also reduce water demand) are a few recent advances ready for use, and data centers have historically embraced new efficiency technologies. “Between 2015 and 2022, worldwide data center energy consumption rose an estimated 20% to 70%, but data center workloads rose by 340%, and internet traffic increased by 600%,” Brian Potter, senior infrastructure fellow at the Institute for Progress, wrote in 2024.
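Potter's figures imply a steep drop in energy used per unit of work. The sketch below works that out, reading "rose by 340%" as growth to 4.4 times the 2015 level; that reading is our assumption about the quoted statistic.

```python
def relative_energy_per_workload(energy_growth_pct: float,
                                 workload_growth_pct: float) -> float:
    """Energy per unit of workload in 2022, relative to 2015 (1.0 means unchanged)."""
    energy_multiple = 1 + energy_growth_pct / 100
    workload_multiple = 1 + workload_growth_pct / 100
    return energy_multiple / workload_multiple


# Energy consumption rose an estimated 20% to 70%; workloads rose 340%.
low = relative_energy_per_workload(20, 340)
high = relative_energy_per_workload(70, 340)

print(f"Energy per workload fell by roughly {(1 - high) * 100:.0f}% "
      f"to {(1 - low) * 100:.0f}%")
```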

The next solution is implementing on-site power production and energy storage. Hyperscalers can build their own solar farms, natural gas turbines, fuel cell servers, or battery storage systems on site, reducing load placed on the grid. Many data center companies are already choosing to do this, but policymakers and regulators can also require or incentivize it by reducing lengthy grid connection times for centers that incorporate on-site power.

Another key solution is demand flexibility, dialing back power use at select times or in certain locations. Data centers can accomplish this by having groups of server racks draw power from on-site battery storage rather than the grid for a few hours when system-wide electricity demand is high. If practical, data centers could also temporarily throttle power to their servers and cooling systems in exchange for monetary incentives from their utility.
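The battery approach can be sketched as a simple hourly rule: when system-wide demand crosses a threshold, the data center runs on its battery until the battery is empty. Every number here, along with the function and parameter names, is hypothetical, and the sketch ignores recharging and conversion losses.

```python
def grid_draw_with_battery(system_demand_mw: list[float],
                           dc_load_mw: float,
                           threshold_mw: float,
                           battery_mwh: float) -> list[float]:
    """Hourly grid draw (MW) of a data center that switches to battery power
    whenever the rest of the system's demand exceeds threshold_mw."""
    remaining_mwh = battery_mwh
    draw = []
    for demand in system_demand_mw:
        if demand > threshold_mw and remaining_mwh > 0:
            # One-hour steps, so MW drawn for an hour equals MWh consumed.
            from_battery = min(dc_load_mw, remaining_mwh)
            remaining_mwh -= from_battery
            draw.append(dc_load_mw - from_battery)
        else:
            draw.append(dc_load_mw)
    return draw


# Hypothetical afternoon: demand from everyone except the data center, in MW.
system = [700, 800, 950, 1000, 980, 850]
draw = grid_draw_with_battery(system, dc_load_mw=100, threshold_mw=900, battery_mwh=250)

peak_without = max(s + 100 for s in system)
peak_with = max(s + d for s, d in zip(system, draw))
print(f"Data center grid draw by hour: {draw}")
print(f"System peak without flexibility: {peak_without} MW, with: {peak_with} MW")
```

In this toy case a 250 MWh battery trims the system peak from 1,100 MW to 1,030 MW. A real dispatch strategy would also have to decide when to recharge and how to ration the battery across a longer peak.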

Duke University energy researchers Martin T. Ross and Jackson Ewing published a report in February finding that flexibility can reduce system costs by tens of billions of dollars over the next decade. They are optimistic that data center operators will be on board. “The hyperscalers recognize that, if they want speed to power, flexibility is a path to help with that — and if they want to maintain a social contract to avoid NIMBYism,” Ewing tells Big Think. “There are enough forces pushing towards flexibility that I think it’s feasible. Yes, it’s aspirational, but it’s not purely academic. Real investments are being made.”

That’s good news for grid stability and for electricity costs, but Ewing noted a very real downside. “I think it’s enormously important what happens with modular natural gas generation for these data centers,” he says, pointing to a new report revealing the massive scale of off-grid gas power plants that data center operators are building. “It’s an attractive solution, but it’s a nightmare for emissions and local air pollution. Will it be a stopgap … or is it the way of doing business indefinitely?”

Rather than build their own power plants, data center owners could instead “fully or substantially fund the new generation, transmission, and other upgrades needed to serve them,” analysts with Charles River Associates wrote in February. Such policies would “ensure that utilities can recover their costs, including a rate of return, from the infrastructure they own and operate to serve new large loads.” This would prevent utilities from shifting costs for new power generation and grid upgrades to ratepayers.

Magruder says there’s an opportunity for data centers to be “good grid citizens.” Potter agrees and is optimistic that centers will take it. “The hyperscalers are increasingly on board with paying for any necessary grid and generation upgrades,” he says. “The real problem is that we’ve spent several decades without increasing electricity generation capacity in the U.S. and have lost the muscle memory for it.”

If we’re being honest with ourselves, we’ve neglected to make needed upgrades to America’s power grid. The rapid growth of AI data centers is simply forcing us to reckon with many years of indolence. Around 70% of power lines in the U.S. were built more than 25 years ago, and many date back to the 1970s. Thanks to energy efficiency improvements, chiefly LED lighting, and the offshoring of manufacturing to China, electricity demand remained relatively flat between the mid-2000s and early 2020s, a big break from past trends.

Yet, instead of taking advantage of this lull to prepare for the future by upgrading transmission lines and incorporating smart grid technologies en masse, policymakers procrastinated. We’re now forced — on the fly — to do the work we should have spaced out over a decade.

The challenge is surmountable, but understandably frustrating. Residents of Joliet are right to express concern. Their activism is helping to ensure that interests align behind a win-win future, where we can have artificial intelligence and affordable electricity, too.