As artificial intelligence has become a mainstream and ubiquitous tool for hundreds of millions or even billions of people around the world, specifically through chatbots and large language models (LLMs) like Claude, Gemini, ChatGPT, Llama, and many others, it has both opened new pathways for problem solving and created new pitfalls for students, early-career professionals, and non-experts seeking to create the illusion of expertise. While many who create, sell, or promote LLMs laud their use cases, a significant worry has arisen among students, teachers, professors, and education researchers: that students are using these artificial tools not to enhance their learning but to replace it, outsourcing the hard and rewarding work of critical thought to LLMs simply by prompting them.

To be sure, this is a real problem: a genuinely new way that students (as well as many others) are using the tools at their disposal to complete their assigned work, at the cost of short-cutting their own intellectual development. But how big a problem is this, really, and who does it impact the most? That’s what physics graduate student Cameron Bishop wants to know, asking:

“As a student in physics now entering my 3rd year of [graduate studies], have you written about the impact of these LLMs/chatbots on students?”

As always, there’s a lot to unpack here, but it’s not all “doom and gloom” as some might fear. Let’s go through the impacts of these new technologies, but with a view to how students historically have cheated themselves as well, to put things in their proper context.

When it comes to learning, whether in a scholastic environment or otherwise, the goal is not, as so many mistakenly believe, for the student to get the right answer, or to come up with an acceptable answer, to whatever question they’re prompted with. The goal is for the student to gain an understanding of the material: understanding that is a further step along their journey toward mastery of a subject. Whether that subject is reading comprehension, a foreign language, wrapping your head around a historical event, mathematical fluency, or the ability to solve scientific problems isn’t particularly relevant. The goal is one and the same: to become “good” at whatever you’re working toward learning.

While every subject is different, and every student has their own strengths, weaknesses, and talents, the method for becoming good at all of these is very similar: you have to work at it. You must invest:

- time,

- energy,

- and effort,

into a wide variety of aspects of the subject. You have to read, study, solve problems, familiarize yourself with the material, and think about it deeply and repeatedly, and to do so over long periods of time. You have to make a sustained effort, and to not get discouraged by setbacks. But most importantly, you have to grow your mind.

When you think about the professionals who are not just good, but experts, in any field you can study, what might stand out to you is how different they are from one another. Not just different in how they look, in their educational backgrounds, and in the particular topics that they research and work on, but different in terms of how they fundamentally think about their subject and how they approach problems or puzzles that they encounter within it.

“How,” the student often wonders, “can these multiple experts, all of whom are so good at what they do and what I’m trying to get good at, have such fundamentally different ways of making sense of this complex subject?”

And what the student doesn’t understand (in fact, what they couldn’t possibly understand until they have acquired that expertise themselves) is how one develops that expertise. Sure, there are many steps that everyone has to go through:

- you have to take the same foundational courses,

- you have to learn the vocabulary and jargon of your field, becoming fluent in it,

- you have to learn how to solve certain classes of problems adeptly,

- and you have to accrue experience by working through a variety of problem-solving examples,

and those experiences are similar for everyone. But what one student takes away from that versus another is going to be very different.

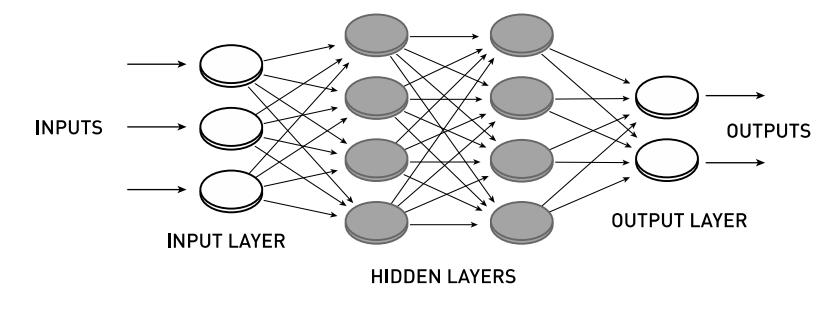

That’s because, starting at a young age, we all began developing different “toolkits” in our minds for problem solving. The further we advance our education, and the more classes of problems we become capable of solving, the more we enhance those mental toolkits by adding new and more powerful machinery to them. And very often, just as when you face a physical task that requires tools, there’s more than one approach that will lead you to the correct solution; there are often multiple, equally valid ways to approach whatever problem or puzzle you’re facing.

The key is to ensure that you, with the toolkit you have, can:

- understand the problem you’re facing,

- set up a method for approaching, learning about, and making sense of the problem,

- identify at least one candidate method for solving that problem with only the tools that you have in your toolkit,

- and then adeptly use those tools to attack the problem,

where, if you do every step correctly, you’ll arrive at a valid, viable solution. Even though it may be possible to take the same problem to many different experts and to have each expert offer a different potential solution, each one of those experts is likely to come up with a valid, successful approach to that puzzle.

What often separates people who “make it” in a challenging academic field from those who don’t is their willingness to put in the hard work necessary to grow their minds in general, and their mental toolkits in particular, in the ways that enable them to devise those solutions. It’s easy to see how a lack of hard work, or an unwillingness to put in the effort, can derail any attempt to grow your mind in exactly those ways.

- If you don’t spend appropriate time with the vocabulary you need to know to give words to your ideas, you’ll never learn how to communicate in that foreign language you’re attempting to learn.

- If you don’t read the historical or literary texts you’re supposed to be examining for yourself, you’ll be restricted to only knowing about the criticisms and summaries of others, rather than having gleaned the ability to comprehensively analyze those primary sources for yourself.

- And if you don’t practice the mathematical problem-solving skills or the scientific skills associated with conducting laboratory experiments, you won’t be able to become adept at those efforts, which come along with their own type of “fluency” just like any language or literary endeavor does.

Most of us, unfortunately, are never told how to learn; we’re given problems to solve and homework to complete and texts to read and reports to write, and it’s sort of tacitly expected that by completing that work, we’ll gain the skills we need to take steps toward that expertise ourselves.

But there’s a surefire way to undermine that traditional path toward gaining that expertise, and it’s a path that students have been taking for as long as teachers have been teaching and giving assignments: to cheat. When students cheat, we often blame:

- the tools at their disposal,

- the technologies that enable those tools,

- or the students themselves, for doing the cheating,

but we never bother to point a finger at the culprit that makes this all possible: an educational system that rewards students based on whether the assignments and assessments they turn in meet a certain standard of competence. We use performance on those assignments and assessments as a proxy to gauge the student’s competence and capabilities, but the two are not necessarily the same thing.

It’s often possible — and often, it’s much easier — to complete an assignment without doing the hard work ourselves, and instead to take such a short-cut. After all, if you view the goal of education as obtaining a high grade point average and completing all of your assignments and courses, that’s probably what you’re going to do: optimize your time and efforts to maximize your grades. It’s probably a lot less important to you that you grow your mind in a way that develops your expertise and expands your problem-solving toolkit if that’s not what you’re actively being assessed on.

In educational environments with large numbers of students and little personalized attention, students who focus on mental growth and the acquisition of novel and superior problem-solving skills are the exception, not the norm. How could it be otherwise, when there’s so little that appears to incentivize that approach? That, itself, is not a new problem brought on by artificial intelligence and LLMs; the type of “cheating” that’s so common now goes back long before their rise.

- On mathematics assignments, you could work out the math by hand, or you could use a calculator (or a more sophisticated computational tool, such as MATLAB, Maple, or Mathematica) to work out the math for you; once you turned in the finished product, no one would know the difference.

- On foreign language assignments, particularly at the college level, we were often given take-home tests, and were instructed not to use dictionaries or other notes. Some of us did the assignment as intended; others of us used those forbidden materials anyway.

- And even when I myself was in graduate school for physics, some of us struggled with the homework problems we were assigned (working until we either arrived at the correct solution, fooled ourselves into thinking that our incorrect solutions were correct, or got as far as we could before turning in our incomplete work), while others among us searched the internet until we found written-up and posted solutions to the problems we were attempting to solve, and then simply plagiarized and/or copied someone else’s hard work.

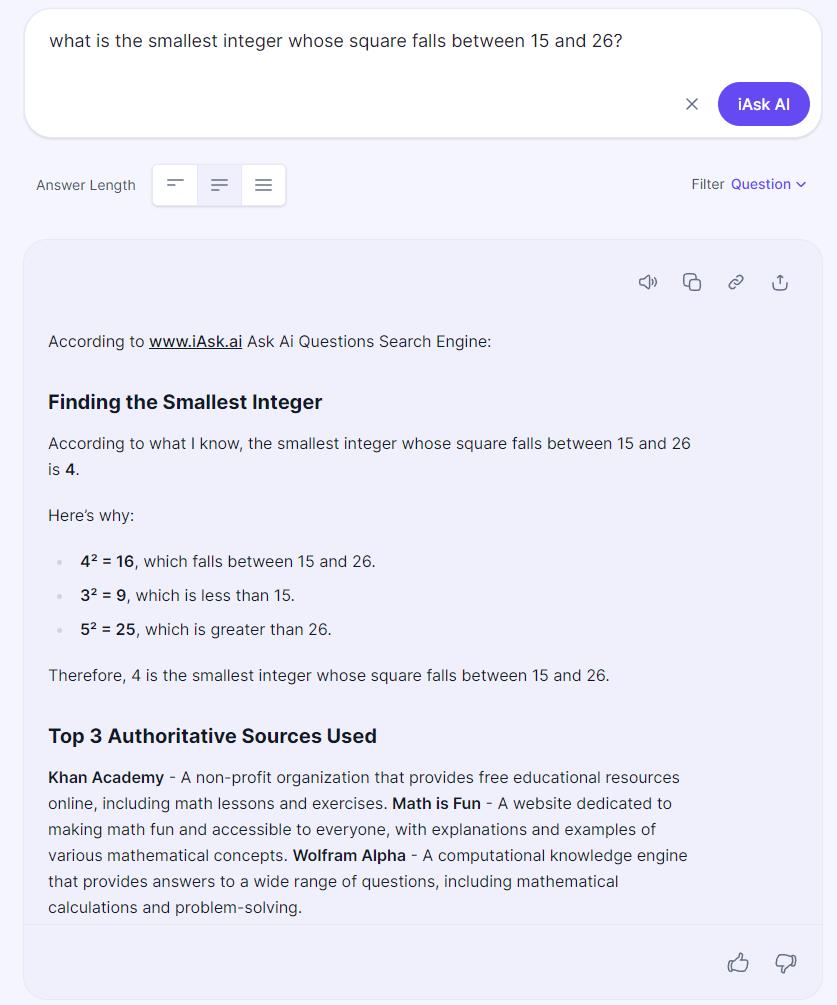

The only difference is that now, with LLMs being so ubiquitous, it’s easier than ever to cheat. Simply prompt an LLM with enough information, and it will produce for you a comprehensive document or manuscript that claims to solve whatever problem you throw at it.

The consequences for these behaviors, which are widespread among modern students in many countries across the world, are far-reaching and severe.

- Literacy and math skills, as tested among high school and college students, have plummeted in recent years, as there are more and more ways for students to complete their assignments without doing the necessary work.

- About two-thirds of all students openly admit to cheating, with a large fraction of those students admitting to using LLMs to do their assignments, either in part or wholly, in defiance of the assigned instructions.

- Many teachers, including even professors at the college level, are now using AI to grade, depriving even the honest students of the feedback necessary to improve their learning.

Of course, there are many other negative consequences that arise as we outsource the hard, critical thinking work — where students struggle with the material initially, as all novices do when they attempt to improve at an endeavor they’re new to — to any external source beyond our own minds. The biggest one is this: if you want to become good at something, like expert-level good at it, you have to work at it until you gain competence, add new tools to your toolkit, eventually gain mastery of that material, and then can apply that mastery (and those tools) to new situations and puzzles where they’ll be relevant.

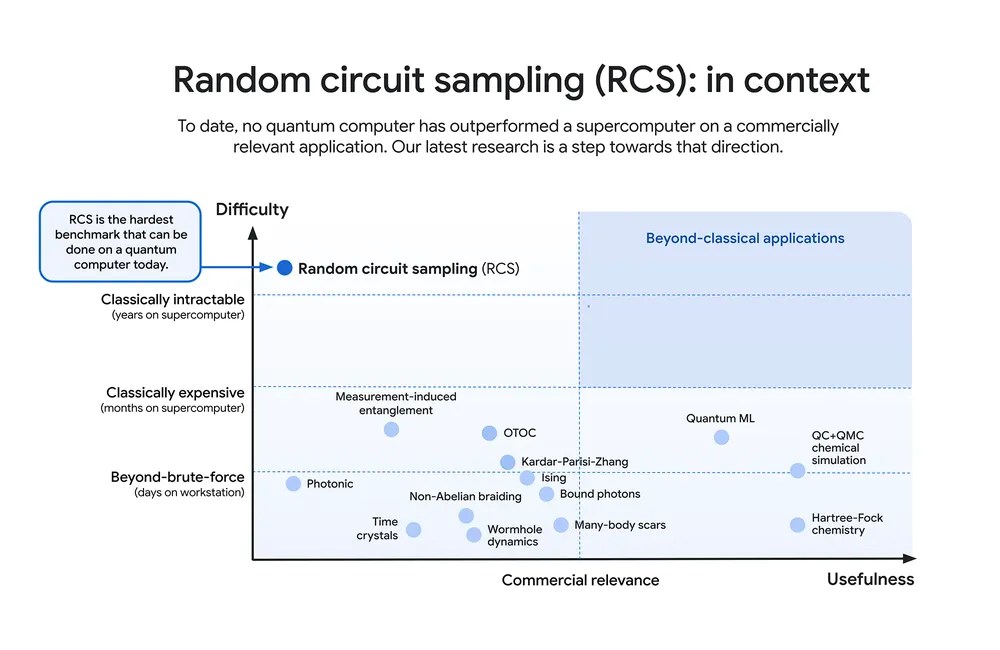

The big danger with AI in the form of LLMs isn’t that they’ll replace or automate various tasks where there are actually good uses for them; this type of machine learning has many important applications, including in science and beyond, particularly for dealing with large data sets and searching for rare objects or events within them. The big danger is that:

- many of us will consult an LLM for a problem (or with a prompt) that it is not particularly well-suited to answer,

- that the machine will return a long, complicated answer that, to the non-expert’s eye, looks plausible and feasible,

- that no one will catch that this is complete nonsense, filled with mistakes and misinformation,

- and that this nonsensical answer will replace what formerly would have been a correct, valid solution arrived at by someone with expert-level knowledge.

That is the danger: that bona fide expertise will become rarer and harder to come by, because there is a critical mass of people involved in the educational system who don’t actually care about student learning, expertise, and mastery, including (but not limited to) many of the students themselves. The big danger is that we will buy into the false narrative that these LLMs can do things that they can’t, a dangerous myth that has already led AI to be deployed in many circumstances where it is a woefully insufficient tool.

There is not going to be a solution to “the big problem” anytime soon, as it would require an overhaul of how education is conducted and valued, overall, in predominantly English-speaking countries at least. However, for an individual student who wants to be successful in this modern environment — who wants to become a bona fide expert themselves — there is still a path forward, albeit one that will take a lot of courage to follow.

- Focus your efforts on skill acquisition, not on grades.

- Spend time and effort solving problems; eschew the short-cuts.

- Do the reading before class, take notes in class, look over your notes and work through sample problems after class, and only after that, attempt your homework.

- Grow your expertise, and do that by seeking out problems (both old and new) to struggle with; embrace the struggle, and celebrate when you make progress.

- And most importantly, don’t do this work in a vacuum; seek out real people, with real expertise themselves, to mentor and guide you along your way.

There is this idea that all things, even science itself, are tending toward automation: where machines will be at the helm of every step of a process. Under this framework, humans will be relegated at best to an advisory role, and at worst to a redundant and unnecessary one. If you yourself, however, actually acquire bona fide expertise, you’ll at least be able to tell whether the LLMs (or whatever form of AI comes to exist) are doing things in a viable way or not. Without that expertise, you’ll figuratively become a slave to a “black box” machine, unable to separate fact from fiction for yourself, and completely reliant on a technology that you do not understand.

There are a lot of things that can be stripped away from someone: wealth, power, resources, health, and more. But what’s in your mind is forever yours. Don’t shortchange yourself by failing to take advantage of the opportunities that abound to grow your mind and your mental toolkit. Throughout your entire life, it will always be right there at your disposal: a concrete example of how hard work pays off.

Send in your Ask Ethan questions to startswithabang at gmail dot com!