This article is part of Big Think’s monthly issue The Roots of Resilience.

AI tools like RFdiffusion have made protein design dramatically easier, cheaper, and faster. This is accelerating vaccine development, opening new paths for treating genetic diseases, and making science more accessible: labs that couldn’t afford to work on certain problems before now can. Those are real gains. But they also create new kinds of exposure. The same tools that speed up vaccine development can be used to accelerate pathogen development. The same accessibility that lets a small lab design a cure lets a different small lab, or a single determined individual, design a threat.

We’ve encountered this paradox before, with CRISPR gene editing, gain-of-function research, and other technologies. Each follows the same pattern: A powerful new capability emerges, the benefits are real, the failure modes are real, and the two scale together. So far, on balance, we’ve come out ahead. The cures have outpaced the harms. The systems have held. But surviving a stress test is different from being designed to absorb shocks and recover.

The prize of technological innovation is a world where major problems get solved faster. But when a powerful capability spreads widely, mistakes propagate further and misuse gets cheaper. Once tools are widely adopted, the systems we’ve built around them can become surprisingly difficult to revamp.

That doesn’t mean technological progress is the enemy. It means progress creates a design requirement: The faster capabilities grow, the more deliberately societies must imbue them with resilience, building in protective layers that will ensure the technologies are safe to deploy at scale. Security. Robustness. Auditing. Recovery. Governance. These determine whether technological acceleration remains a source of broad benefit or becomes a source of brittleness vulnerable to attackers, accidents, or concentrated power.

That leaves us with the question: How do we make rapid AI progress resilient, ensuring we can capture its benefits without creating new fragilities?

Old problem

This isn’t the first time we’ve faced the question of how we should develop technologies with the potential for great good and great harm.

The precautionary principle is one classic response. It argues that when a new technology has the potential to cause serious harm, even if concrete evidence of harm does not exist, we should take protective action. The strength of this approach is that it takes uncertainty seriously. The weakness is that it can become a blank check for halting progress or, worse, a way to concentrate power in whoever gets to define what counts as potential for serious harm. In AI, this could look like restricting who can train the most powerful systems, which may be reasonable at the frontier, where the stakes are highest. But applied broadly, the same logic can end up limiting access to tools that are already making science, medicine, and education more widely available. The challenge is drawing that line, and deciding who gets to draw it.

The Asilomar conference presented a different kind of response. It was convened in 1975 to discuss recombinant DNA, a then-new technique for splicing together DNA from different organisms. The technology raised real fears: What if engineered organisms escaped the lab? Instead of waiting for regulators to act, researchers organized their own moratorium, developed safety guidelines, and created a framework for continuing the work with safeguards in place. It wasn’t perfect, but it showed that “keep moving while building guardrails” was a viable posture, not just a slogan.


Both the precautionary principle and the Asilomar model assume, to some extent, that there’s a trustworthy authority capable of making the call on how to move forward with a new technology: some institution that can weigh the risks, set the rules, and enforce them. That may have worked half a century ago, but it’s not going to work today. According to a 2025 Pew survey, only 17% of Americans trust the federal government to do what is right “just about always” or “most of the time,” down from 73% in 1958, when the question was first asked in national surveys.

Ethereum co-founder Vitalik Buterin is currently developing a new framework for tech development that’s designed for a world where public trust in institutions is already low and unlikely to recover quickly. That means we could apply it to AI now — no need to wait for trust to rebuild before we start safely advancing it and other new technologies.

New solution

Buterin calls the framework d/acc, which stands for “decentralized and democratic, differential defensive acceleration.” The name is a deliberate response to other tech-world philosophies — Buterin coined it in an essay responding to Marc Andreessen’s “Techno-Optimist Manifesto,” and it plays off “e/acc” (effective accelerationism), an online movement that treats technological acceleration as the overriding goal. d/acc is a pushback: Acceleration matters, but direction and defensive capacity matter as much as speed.

Buterin’s point is this: A lot of public arguments about technology are stuck in a binary. One side says: accelerate. Progress solves problems, and delay can be costly. The other says: slow down. Institutions can’t keep up, and the potential downsides of runaway acceleration are too large. Both sides have a point. Both sides also miss what makes the problem hard. Progress is not one-dimensional. It comes in different forms. Some technologies expand human welfare and agency with manageable risk. Others scale power faster than the systems meant to constrain them, until safety and governance become an afterthought.

Instead of focusing on speed, d/acc urges us to think more about direction. “If you learn to drive carefully, you can go very, very fast,” Glen Weyl, economist and principal researcher at Microsoft Research, told Big Think. “So instead of arguing about acceleration or deceleration, it’s better to shift the emphasis to directionality.” Directionality forces a better set of questions. Not “do we move?” but “where are we going?” Not “can we build?” but “can we build in a way that stays steerable?” That is the space d/acc is trying to occupy.

Breaking it down

d/acc has four ideas packed into it, and each one matters.

Differential means being selective. Not everything should be accelerated equally. The idea — drawing on philosopher Nick Bostrom’s concept of differential technological development — is to accelerate the technologies that reduce risk and delay the ones that increase it. The order matters. Ideally, defenses arrive before the attacks they’re designed to stop. Fund the vaccine platforms before the next pandemic, not after. Get the biosurveillance systems working before the tools to engineer pathogens become cheap and widespread.

Defensive means favoring technologies that build resilience, ones that help protect rather than attack. The core question: Does this technology shift the offense/defense balance toward defense? In practice, this could mean investing in open-source vaccines that can be locally manufactured, so we’re not dependent on slow, centralized production when a pandemic hits. In cybersecurity, it could mean things like zero-knowledge proofs, which let you prove you’re a unique citizen of a country without revealing which citizen you are, preserving privacy while still fighting fraud and spam. It could mean trusted hardware chips in phones that stay secure even if the rest of the device is hacked, preventing a single vulnerability from compromising everything. It’s innovation in safety.

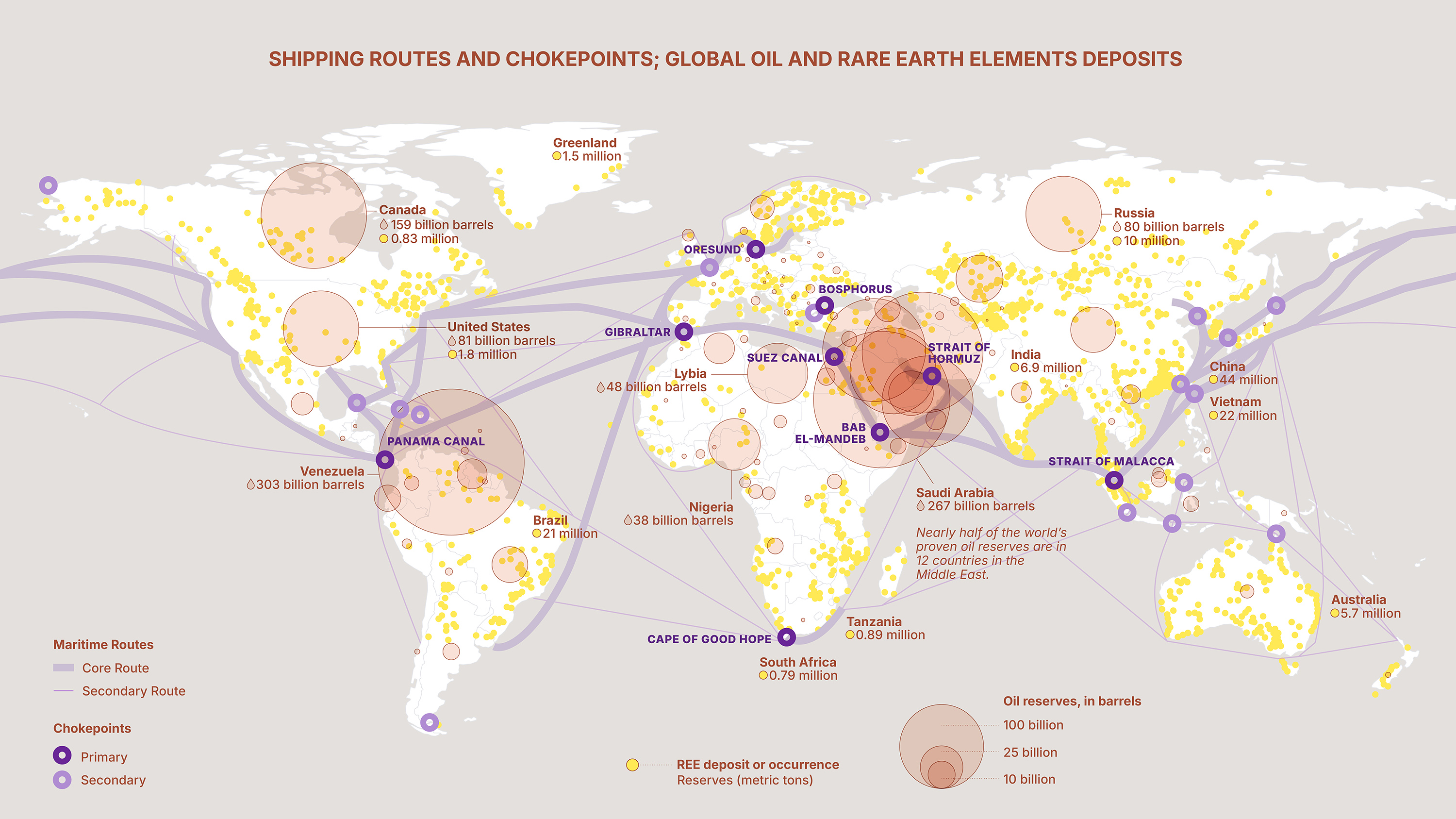

Decentralized is about reducing dependence on single points of failure or control, and prioritizing systems that don’t require everyone to trust one operator, one platform, or one bottleneck. This matters because, as Buterin put it in his 2023 essay on techno-optimism, “the same kinds of managerial technologies that allow OpenAI to serve over a hundred million customers with 500 employees will also allow a 500-person political elite, or even a 5-person board, to maintain an iron fist over an entire country.” In practice, decentralization means things like blockchains that let people transact without intermediaries who can freeze accounts or change the rules; communication infrastructure like Starlink, which kept Ukraine connected when centralized systems were targeted during the war with Russia; or social recovery wallets that protect your assets through a network of trusted contacts rather than a single company.
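To make the social recovery idea concrete, here is a minimal Python sketch, not any real wallet’s implementation. It assumes a hypothetical `SocialRecoveryWallet` with a fixed set of guardians and an approval threshold: the owner’s signing key works day to day, and if that key is lost, any `threshold` guardians acting together can replace it, while no single guardian (and no single company) can. Real wallets enforce this with cryptographic signatures checked by a smart contract; plain strings stand in for keys here.

```python
from dataclasses import dataclass, field


@dataclass
class SocialRecoveryWallet:
    """Toy social recovery wallet; all names here are hypothetical.

    The owner's signing key controls everyday use. Guardians can jointly
    replace a lost key, but no single guardian can do it alone.
    """

    signing_key: str
    guardians: frozenset[str]
    threshold: int
    # Guardian votes collected for each proposed replacement key.
    votes: dict[str, set[str]] = field(default_factory=dict)

    def __post_init__(self) -> None:
        if not 1 <= self.threshold <= len(self.guardians):
            raise ValueError("threshold must be between 1 and the number of guardians")

    def approve_recovery(self, guardian: str, new_key: str) -> bool:
        """Record one guardian's vote for new_key.

        Rotates the signing key and returns True once `threshold` distinct
        guardians have voted for the same key; otherwise returns False.
        """
        if guardian not in self.guardians:
            raise PermissionError(f"{guardian!r} is not a registered guardian")
        voters = self.votes.setdefault(new_key, set())
        voters.add(guardian)  # a set, so repeat votes from one guardian count once
        if len(voters) >= self.threshold:
            self.signing_key = new_key
            self.votes.clear()  # any other pending proposals must start over
            return True
        return False


# Three guardians, any two of whom can recover the wallet.
wallet = SocialRecoveryWallet("lost-key", frozenset({"alice", "bob", "carol"}), threshold=2)
print(wallet.approve_recovery("alice", "fresh-key"))  # False: one vote so far
print(wallet.approve_recovery("alice", "fresh-key"))  # False: repeat vote ignored
print(wallet.approve_recovery("bob", "fresh-key"))    # True: threshold reached
print(wallet.signing_key)                             # fresh-key
```

The design choice that matters is the threshold: set it too low and a couple of compromised contacts can seize the wallet; set it too high and an honest owner may never recover it.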

Democratic is about process. How are decisions about the technology made? Even in decentralized systems, rules will still be needed, including ones about deployment, access, standards, and enforcement. “Democratic” means those rules should be contestable and constrained, not set behind closed doors by a small group. The Linux kernel is a classic example: its development happens in public, and the rules and decisions that shape it are open to challenge through a transparent process.

Put simply, d/acc argues that the goal is not to choose between speed and caution. The goal is to accelerate the benefits of technological innovation while also accelerating the protective layers that make the technology resilient.

The limitations

Of course, every framework has its edge cases, and the moment you try to put something into practice, they start showing up.

“Defense” is politically powerful language. Almost any actor can claim it. Surveillance can be sold as defense. Censorship can be sold as defense. Monopoly control can be sold as defense. “The ‘defensive’ framing doesn’t really cohere for me,” says Weyl. “Defensive of who, from what? I don’t find that distinction very coherent.” We need to establish a useful definition of “defensive” for this aspect of d/acc to hold.

Distributed systems have fewer choke points and fewer single points of failure than centralized ones, but they also make responsibility fuzzy. When something breaks, who fixes it? Who pays for maintenance? Who has the authority to step in when a risk stops being local and becomes systemic? These are classic collective-action problems, the same dynamic behind the “tragedy of the commons.”

This is where d/acc’s democratic instinct matters. The goal isn’t decentralization as an aesthetic, but decentralization that stays governable. People must be able to challenge and improve the technology without needing permission from a single gatekeeper. But more decentralization is not automatically the answer: the balance between what to centralize and what to decentralize has to be found, and then continually maintained.


Buterin himself has flagged a specific worry about advanced AI: If progress comes in sudden leaps rather than steady steps, the stakes could get high fast. A company’s small lead can suddenly become a huge, lasting advantage. The first group to hit a major breakthrough in such a powerful technology may pull so far ahead that others can’t realistically catch up. That gap could translate into enormous influence over markets, security, and politics.

AI is a genuine tradeoff case for d/acc. Diffuse power, and you risk a race between labs and governments deploying increasingly powerful systems with fewer safeguards. Centralize power, and you risk handing lasting control over a transformative technology to a small number of companies or states, with no mechanism to challenge or correct them. That’s why even for decentralization-minded actors, AI keeps showing up as an uncomfortable edge case.

Buterin’s proposed solution is layered. First, hold users, deployers, and developers legally accountable for harms caused by AI systems, treating those harms as the result of human decisions rather than the acts of autonomous agents. “Liability on users creates a strong pressure to do AI in what I consider the right way,” he wrote in a 2025 follow-up to his techno-optimism essay. If liability proves insufficient, he suggests a more drastic fallback: the capability to implement a “soft pause” on access to industrial-scale compute. Reducing globally available computing power by 90–99% for a year or two during critical periods could buy time to prepare for anticipated impacts. This could be implemented through hardware registration, location verification, and chips requiring weekly authorization from multiple international bodies.
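The weekly-authorization mechanism is the most concrete piece of the soft-pause idea, so it is worth sketching. The Python below is a hypothetical illustration, not Buterin’s design: it assumes each registered body issues one authorization per ISO calendar week, and a chip runs only if it holds valid authorizations for the current week from at least `threshold` of those bodies. HMAC stands in for the public-key signatures a real scheme would need, and the function names, keys, and two-of-three threshold are invented for the example.

```python
import hashlib
import hmac
from datetime import date


def week_id(day: date) -> str:
    """ISO year and week number, e.g. '2025-W32'."""
    year, week, _ = day.isocalendar()
    return f"{year}-W{week:02d}"


def authorize(body_key: bytes, week: str) -> bytes:
    """Stand-in for one body's signature over a given week.

    Assumption: a real scheme would use public-key signatures so chips hold
    only public keys; HMAC-SHA256 keeps this sketch within the stdlib.
    """
    return hmac.new(body_key, week.encode(), hashlib.sha256).digest()


def chip_may_run(today: date, authorizations: dict[str, bytes],
                 body_keys: dict[str, bytes], threshold: int) -> bool:
    """Return True only if at least `threshold` registered bodies have
    authorized the current week. Unknown bodies and stale tags count for nothing."""
    week = week_id(today)
    valid = sum(
        1
        for body, tag in authorizations.items()
        if body in body_keys
        and hmac.compare_digest(tag, authorize(body_keys[body], week))
    )
    return valid >= threshold


# Hypothetical registry of three bodies, any two of which must sign off each week.
keys = {"body-a": b"secret-a", "body-b": b"secret-b", "body-c": b"secret-c"}
this_week = date(2025, 8, 4)
tags = {b: authorize(keys[b], week_id(this_week)) for b in ("body-a", "body-b")}

print(chip_may_run(this_week, tags, keys, threshold=2))          # True
print(chip_may_run(date(2025, 8, 11), tags, keys, threshold=2))  # False: new week, no fresh tags
```

Because each authorization in this sketch covers a week rather than a specific chip, the bodies cannot approve one device while withholding approval from others, and if enough of them simply stop signing, every chip lapses when the week ends.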

But Buterin’s longer-term vision isn’t about permanent restrictions — it’s about changing what we’re building toward. Instead of racing to create autonomous superintelligence, we should invest in tighter human-AI integration: brain-computer interfaces, augmentation tools, and feedback loops that keep humans genuinely in the loop rather than watching from outside. “It does frustrate me how a lot of AI development is trying to be as ‘agentic’ as possible,” Buterin wrote in August 2025, “when actually more avenues for human control not only improves results but also increases safety.”

How do you make this shift happen? The liability rules described above are one lever: holding AI users, deployers, and developers accountable for harms would create pressure to build AI tools rather than autonomous agents. Beyond that, Buterin advocates decentralized funding, because it can sustain open-source, human-centric AI projects that don’t fit traditional profit models. “Strong decentralized public goods funding is essential to a d/acc vision,” he argues, “because a key d/acc goal (minimizing central points of control) inherently frustrates many traditional business models.” The goal is to make human-AI collaboration tools as fundable and attractive as the autonomous systems currently dominating AI investment.

What’s at stake?

Almost all important technologies, including AI, can be used for both protection and harm. This is why d/acc can’t be a simple whitelist of “good tech.”

A recurring idea in d/acc conversations is that you can’t build safety on good intentions. If a system can be exploited, it eventually will be — by accident, by incentive, or by adversary. The question isn’t how to design for best-case users, but how to make systems hold up under worst-case pressure. “All bad things will always happen,” Joel Leibo, a research scientist at Google DeepMind, tells Big Think. “You have to design a system that will be robust to that.”

Technology is going to keep advancing, and d/acc isn’t built around the hope that we can prevent failure entirely. It treats misuse, capture, and accidents as defaults and asks a harder question: Can we build genuinely resilient systems, technical and institutional, where failures stay limited, recoverable, and correctable, rather than cascading system-wide? d/acc doesn’t have all the answers, but it insists on the right question: How do you get the benefits of acceleration without the brittleness? And it rules out the two easiest responses — “just go faster” and “just stop” — because neither actually works.

The right answer, if there is one, has to be more demanding. Accelerate the breakthroughs that expand what’s possible for human wellbeing. Accelerate the resilience, the protective layers that keep those breakthroughs safe to deploy at scale. And do both without turning “safety” into a single point of control that’s just as fragile as the risks it was meant to prevent. That’s a harder path. But the promise of technological progress was never that it would be easy. It was that it would be worth it.
